Biosensing Music

Role

UX & UI Designer

Tools

Figma

Duration

Figma

Team

Project Manager

UX & UI Designer

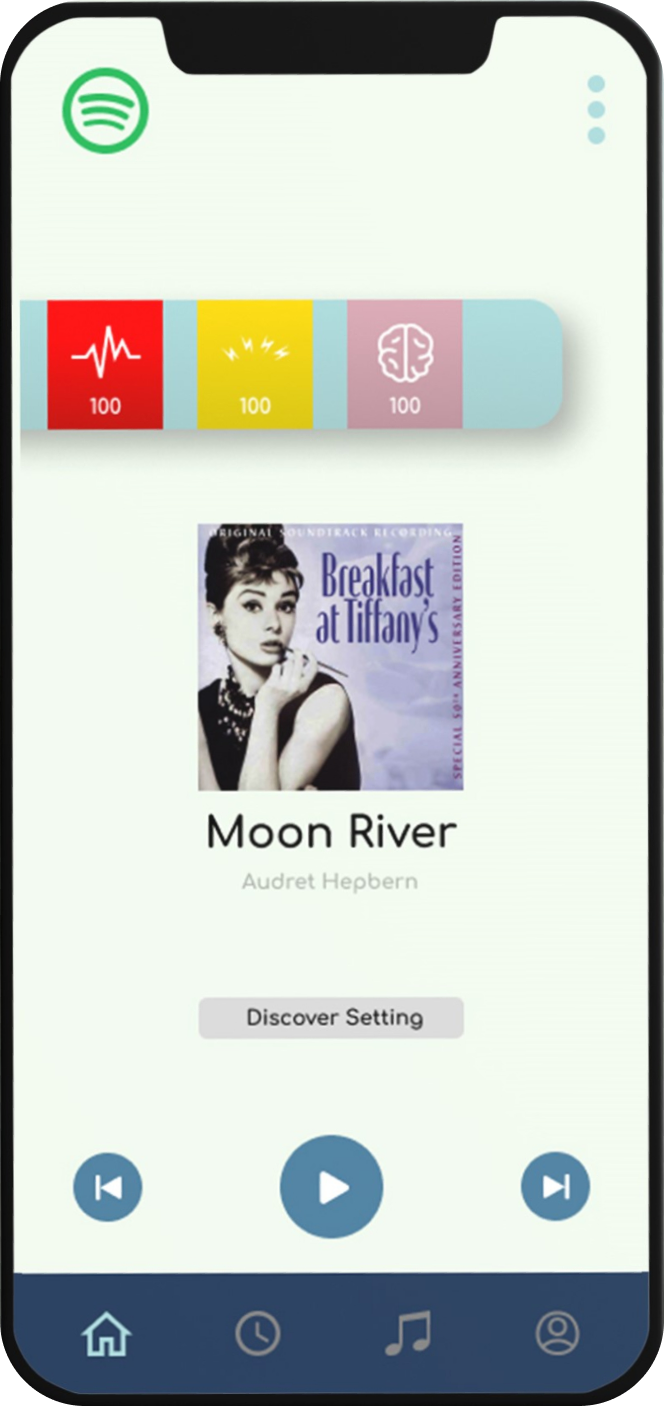

A speculative UX research and product design project exploring how physiological responses to music can reveal deeper, unconscious preferences.

Music profoundly affects the human body and mind, yet most streaming platforms rely solely on behavioral data such as skips or replays to infer preferences. What if we could understand someone's music taste by measuring how their body responds while listening, rather than by what they click or say they like?

This project explores the intersection of biometric sensing and emotional design: using heart rate, brainwaves, stress markers, and pupil dilation to map a listener’s true emotional response to music.

While self-initiated, this project imagined future collaboration with:

Frameworks from behavioral science, biometric UX, and affective computing were used to validate the design direction.

How might we reveal a user’s authentic music preferences by decoding their physiological reactions — beyond likes, skips, or playlists?

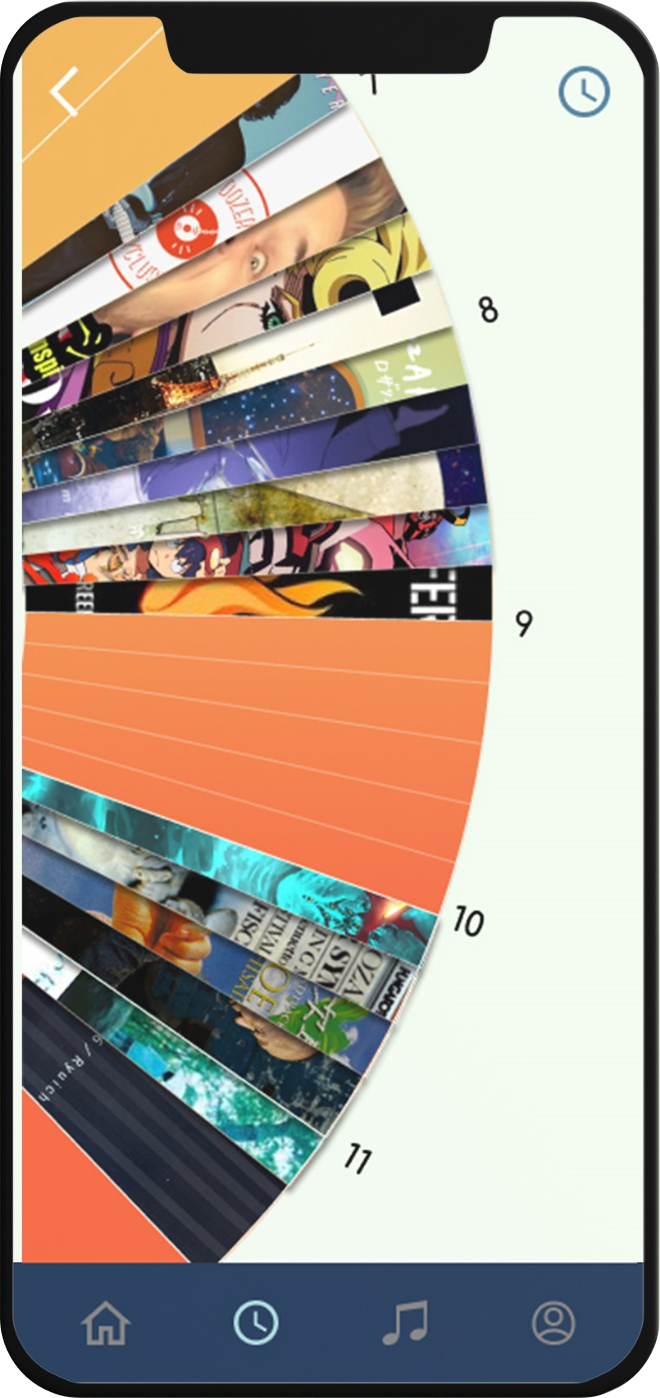

A chronological interface where users can see what music they listened to and how their body responded — in real time. This moves beyond play counts or likes, letting users reflect on how music actually made them feel physiologically.

For each song played, the timeline visualizes biometric changes using colored overlays:

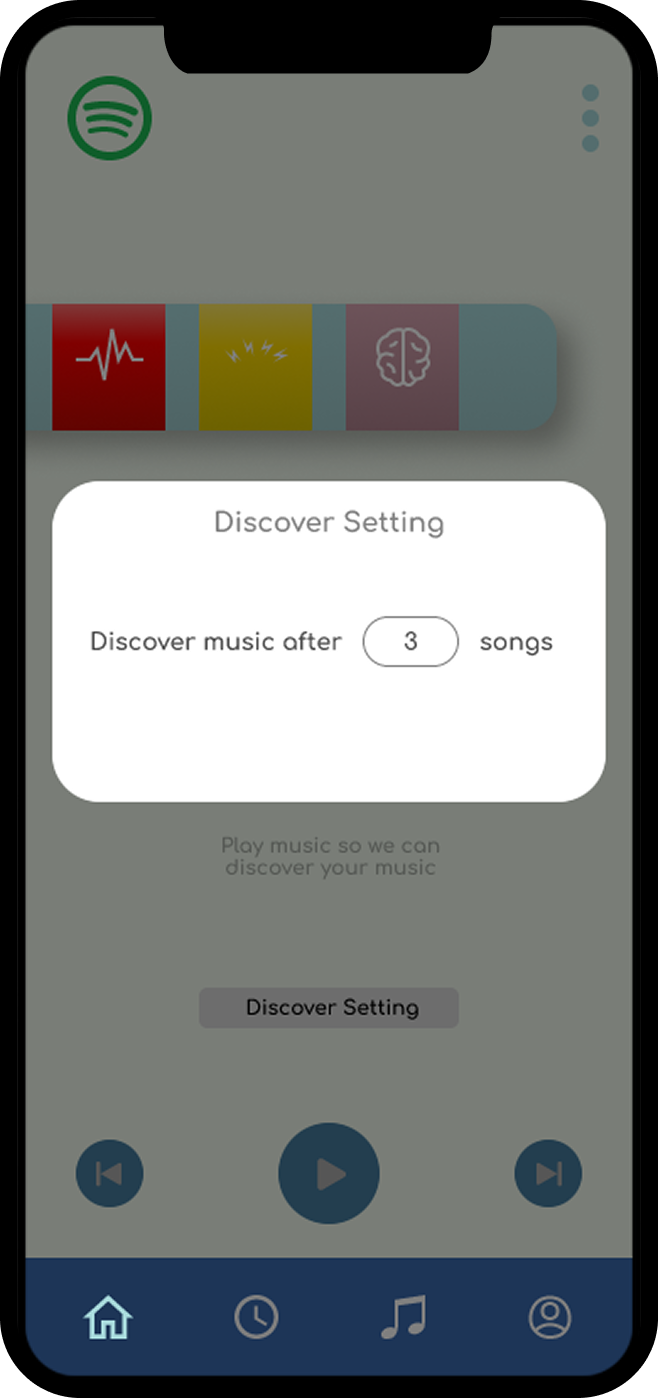

Instead of using genres, this recommendation engine finds music that resonates with a user’s biological response. For example:
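One way to picture matching on bodily response rather than genre is a nearest-neighbour lookup over per-track response vectors. The sketch below is illustrative only and is not the project's actual engine: the track names, the four signal dimensions, their values, and the `cosine` and `recommend` helpers are all assumptions made for the example.

```python
from math import sqrt

# Hypothetical per-track response vectors, one value per signal:
# (heart-rate change, alpha-power change, pupil-dilation change, stress-marker change).
# Values are invented for illustration and assumed to be normalised to [-1, 1].
RESPONSES = {
    "track_a": (0.6, -0.2, 0.5, -0.4),
    "track_b": (0.5, -0.1, 0.4, -0.3),
    "track_c": (-0.3, 0.4, -0.2, 0.6),
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def recommend(seed, responses, k=1):
    """Rank the other tracks by how closely their bodily response matches the seed track."""
    target = responses[seed]
    scored = [
        (cosine(target, vec), name)
        for name, vec in responses.items()
        if name != seed
    ]
    # Highest similarity first; keep the top k track names.
    return [name for _, name in sorted(scored, reverse=True)[:k]]


print(recommend("track_a", RESPONSES))
```

Here `track_b` is suggested for a listener who responded well to `track_a`, because the two tracks produced a similar pattern across the signals, regardless of how either track is labelled by genre.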

Designing for the Invisible

This project pushed me to work with signals users don’t see or verbalize — like brainwaves or pupil dilation — and translate them into intuitive UX.

From Data to Meaning

Translating raw signals (like alpha suppression or salivary cortisol) into emotional insight required metaphor, context, and deep empathy.

Tech that Feels

Biosensing interfaces have the potential to deepen self-awareness. I now think of software more as an extension of emotional intelligence.

Music is more than preference — it’s embodied emotion. This project imagines a future where our tools don't just follow our clicks, but understand how we feel.