Discovery fails not because games are undiscoverable, but because trust signals are absent.

Steam's algorithm optimizes for velocity. Established titles absorb new traffic; small studios without existing audiences stay invisible. Players get volume, not decision-useful signals. Publishers operate blind: identifying promising titles before committing to deals requires engagement data that is either locked inside developer accounts or nonexistent.

Over 10,000 games are released on Steam annually. Discovery failure is the primary commercial failure mode for small studios, outweighing poor game quality as a cause of low sales. The gap is not traffic. It is that no surface makes early credibility visible to the right audience at the right time. PlayFirst is designed around that gap.

Define the architecture before designing the interface.

This is an ongoing concept project. Screens reflect an earlier design state. The structural constraints were real: 0-to-1 architecture without a user base or live data meant every decision was a structural bet without empirical confirmation. The chicken-and-egg bootstrapping problem required an architectural solution, not a UI-layer fix.

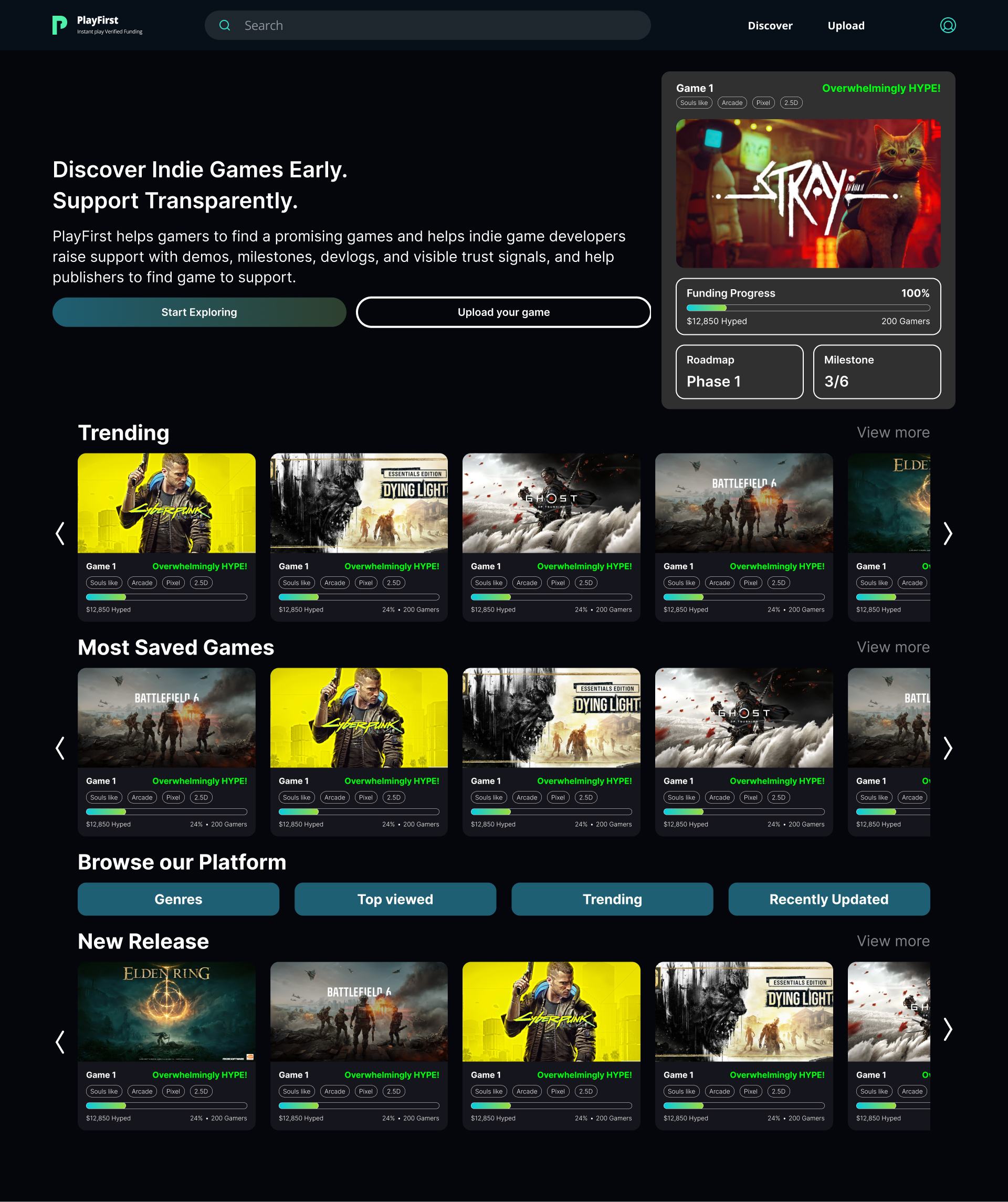

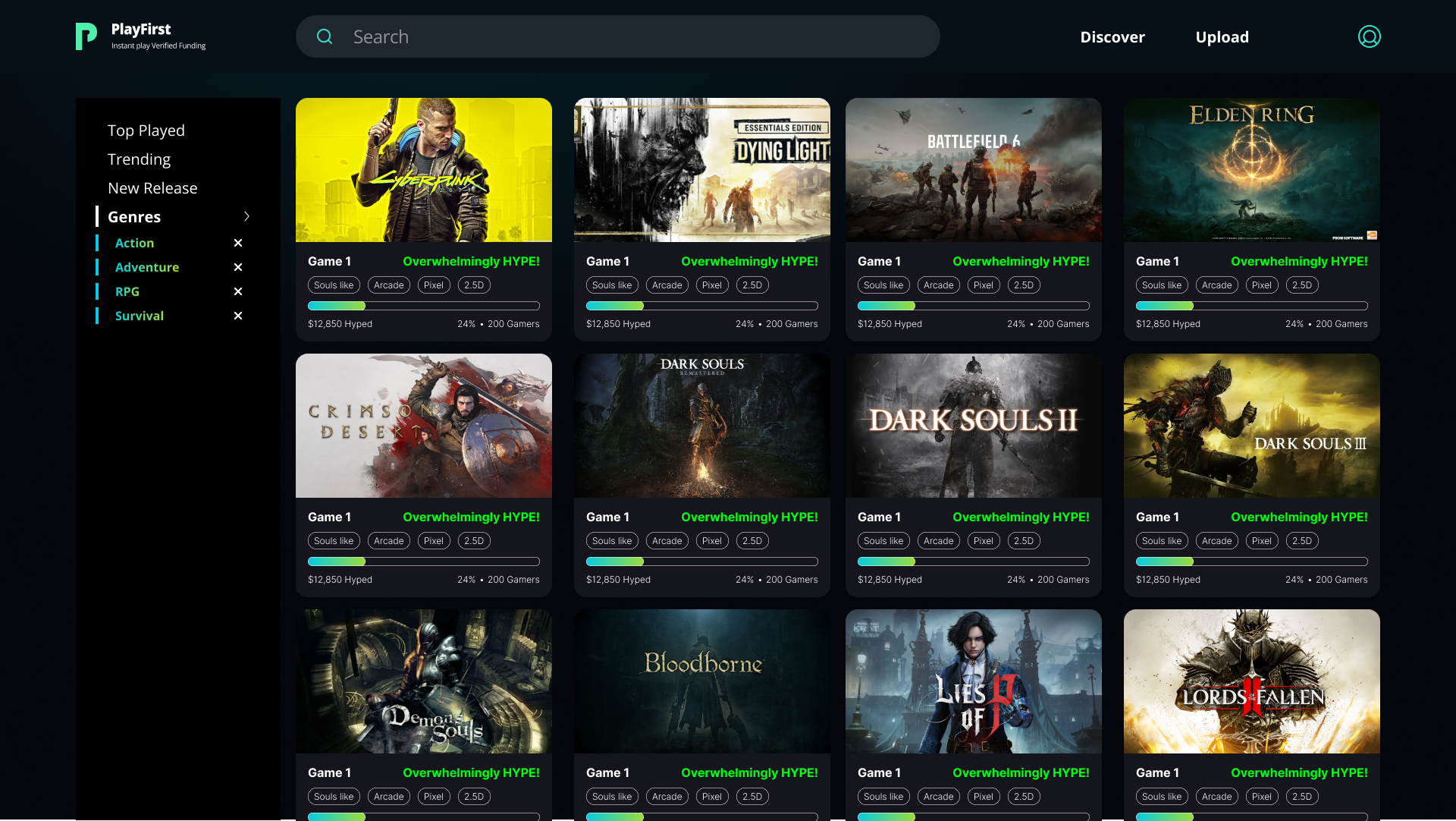

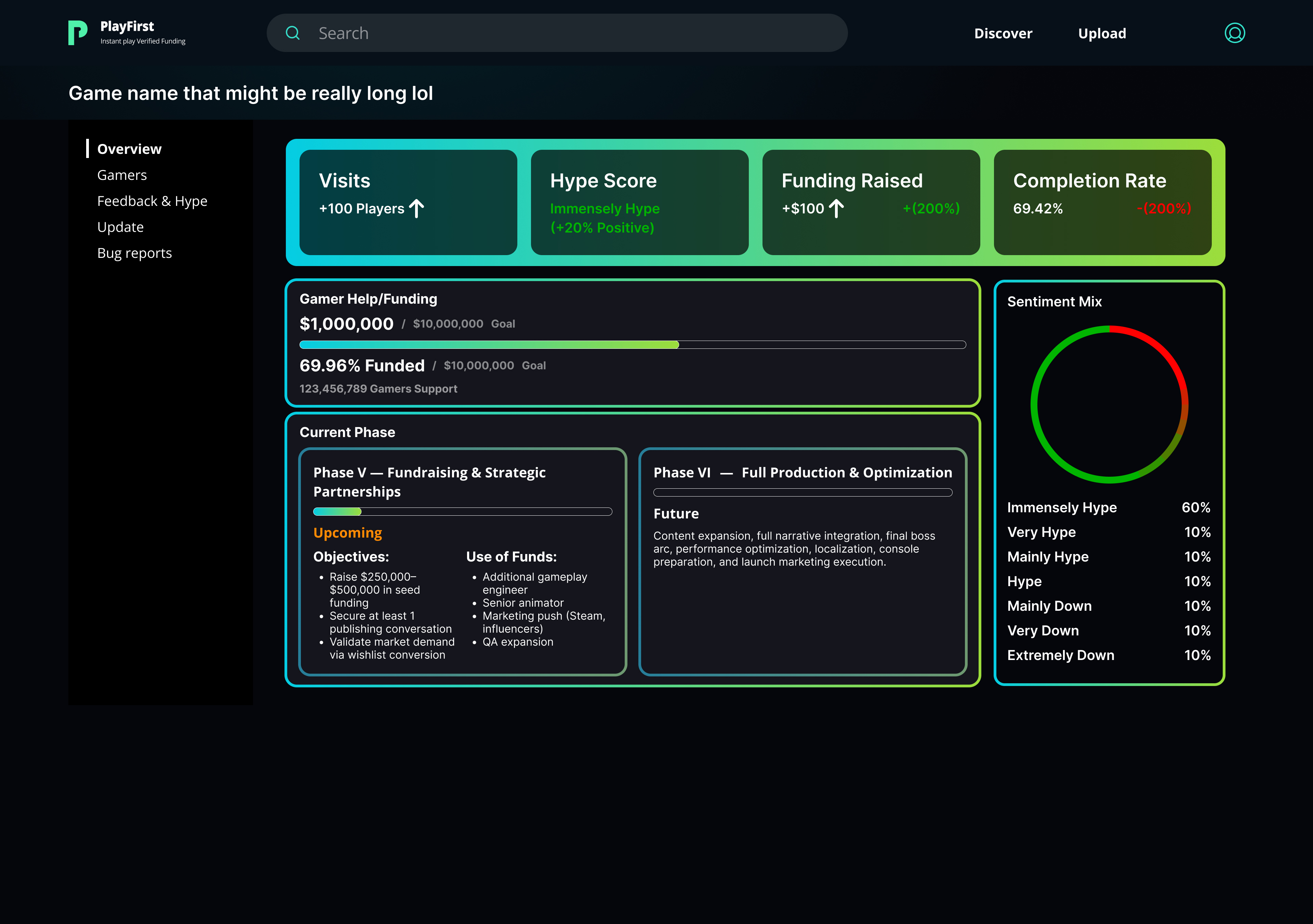

Current iteration priorities:

- Before-login and after-login discovery states as intentional product architecture.

- Structured game page creation as trust formation infrastructure.

- Developer analytics as early-readiness tooling, not vanity reporting.

- Review logic that produces decision-useful signals rather than aggregate sentiment.

Key inputs.

Competitive analysis of discovery and trust-signal patterns on Steam, itch.io, and the Epic Games Store. Benchmarking of evaluation processes at indie-focused publishers, drawn from GDC talks and public fund documentation. Analysis of crowdfunding-adjacent trust dynamics on Kickstarter and Fig. Secondary research on community-driven discovery and its relationship to early commercial outcomes.

- 01 Distribution leverage is asymmetric, not absent. Developers with existing audiences consistently reach visibility thresholds. The architecture must create a path to equivalent reach for studios starting without an audience, or it does not solve the core problem.

- 02 Publishers evaluate on structured early signal, not polished demos. Time played, save rates, hype trajectory, and early funding momentum predict commercial viability better than build quality at the pitch stage. Structured signal visibility is a product feature, not a reporting tool.

- 03 Trust converts players more reliably than algorithm rank. Visible community traction, clear roadmap commitment, and transparent funding use create stronger support decisions than recommendation-weighted feeds alone. Trust is not a soft outcome; it is a conversion mechanism.
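As a rough illustration of insight 02, the four signals it names could be reduced from raw engagement counts to a small publisher-facing summary. This is a minimal sketch only: the `EarlySignals` fields, the `summarize` function, and the choice of formulas (median session length, saves per visit, week-over-week follow change, raised over goal) are all assumptions made for illustration, not part of the PlayFirst design.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class EarlySignals:
    """Hypothetical raw engagement counts for one game page."""
    session_minutes: list[float]  # time played per session
    page_visits: int
    saves: int                    # players who saved the game
    weekly_follows: list[int]     # follows per week, oldest first
    funding_raised: float
    funding_goal: float


def summarize(s: EarlySignals) -> dict[str, float]:
    """Reduce raw counts to the four signals named in insight 02."""
    save_rate = s.saves / s.page_visits if s.page_visits else 0.0

    # Hype trajectory (assumed definition): relative change in follows
    # between the two most recent weeks.
    if len(s.weekly_follows) >= 2 and s.weekly_follows[-2] > 0:
        prev, last = s.weekly_follows[-2], s.weekly_follows[-1]
        hype = (last - prev) / prev
    else:
        hype = 0.0

    funding = s.funding_raised / s.funding_goal if s.funding_goal else 0.0
    typical_session = median(s.session_minutes) if s.session_minutes else 0.0

    return {
        "median_session_minutes": typical_session,
        "save_rate": save_rate,
        "hype_trajectory": hype,
        "funding_progress": funding,
    }
```

The point of the sketch is that each value is a ratio or a trend rather than a raw total, which is one way a page could stay comparable between a studio with a large audience and one starting from zero.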

Four decisions that shaped the architecture.

The updated product rationale is organized around four structural decisions: one canonical game page as a shared trust surface for all three audience types; structured upload fields that make game pages credible and supportable by design; developer analytics positioned as audience-readiness infrastructure rather than vanity metrics; and review logic designed to produce constructive, decision-useful signals. The before-login and after-login discovery states are intentional product architecture, not a session management detail. Before-login discovery must convert on trust signals alone.
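One way to picture the canonical page and the before-login and after-login states is a single record that is projected differently per viewer. The sketch below is hypothetical throughout: the `GamePage` field names, the `Audience` roles (assumed here to be players, developers, and publishers), and the split between public and unlocked fields are illustrative assumptions, not the project's actual schema.

```python
from dataclasses import dataclass, asdict
from enum import Enum


class Audience(Enum):
    PLAYER = "player"
    DEVELOPER = "developer"
    PUBLISHER = "publisher"


@dataclass
class GamePage:
    """One canonical record per game; all field names are hypothetical."""
    title: str
    roadmap: str
    funding_use: str
    follower_count: int
    supporter_updates: str
    save_rate: float
    median_session_minutes: float


# Visible before login: trust signals only.
PUBLIC_FIELDS = {"title", "roadmap", "funding_use", "follower_count"}

# Additional fields unlocked after login, per audience (assumed split).
EXTRA_FIELDS = {
    Audience.PLAYER: {"supporter_updates"},
    Audience.DEVELOPER: {"supporter_updates", "save_rate", "median_session_minutes"},
    Audience.PUBLISHER: {"save_rate", "median_session_minutes"},
}


def view_for(page, audience=None):
    """Project the canonical page for a viewer; None means logged out."""
    allowed = set(PUBLIC_FIELDS)
    if audience is not None:
        allowed |= EXTRA_FIELDS[audience]
    return {k: v for k, v in asdict(page).items() if k in allowed}
```

Calling `view_for(page)` with no audience returns only the public trust fields, while `view_for(page, Audience.PUBLISHER)` adds the engagement fields. Because every view is derived from the same record, the three audiences cannot drift onto separate, inconsistent pages.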

Selected interfaces.

What this proves and what it doesn't.

- The structural argument for shared trust surfaces outperforming fragmented role-separated products is supported by platform economics research. Engagement concentration creates compounding value; fragmentation destroys it before network formation.

- Structured early-signal evaluation is already used by publisher-adjacent systems at scale. App Store editorial selection and Devolver Digital's public discovery process validate the pull-based evaluation model structurally.

- Crowdfunding-adjacent trust patterns validate that visible funding progress, roadmap commitment, and milestone transparency convert support decisions. Kickstarter and Fig demonstrate this at meaningful scale.

- Not yet proven: whether publishers would invest in active evaluation before developer volume reaches a meaningful threshold. This is the riskiest activation assumption in the model and cannot be validated without live publisher behavior.

- Not yet proven: whether structured upload fields reduce or increase developer churn at onboarding. Higher setup effort is a real activation risk even when the payoff is higher page credibility.

The structural decisions are the work.

The most important design decisions in this project are not visible in the screens. They are in the architecture: one page serving three audiences, structured fields that create credibility rather than entry forms that collect data, analytics framed as readiness rather than vanity, review logic that produces signal rather than sentiment. Each of these required defending against simpler alternatives that would have been faster to design but would have failed the core problem.

The ongoing evolution of this project has clarified something the earlier version did not make explicit: trust is the product. Discovery, analytics, reviews, and upload structure are all trust formation systems. If the product does not build trust at each interaction point, the network cannot form. Getting that framing right earlier would have changed the sequencing of every design decision that followed.