The interface design requirements are the real problem, not the technology.

This design research project explores whether passively collected biometric signals could meaningfully improve the emotional accuracy of music recommendation systems. The core question is not whether the technology is feasible (wearable sensors make it technically tractable), but whether the interface design requirements for transparent, trustworthy biometric-aware recommendations can be met without creating the surveillance anxiety that causes user abandonment.

Music recommendation systems are accurate at predicting genre preference and listening habit patterns. They are poor at detecting current emotional state. A user who has listened to ambient music for focus for three years gets ambient music recommended during a workout, because historical pattern dominates. Biometric signals could close this gap, but no mainstream streaming service has implemented them. The barrier is not technical: it is interface design. Most biometric products fail not because the sensor data is wrong but because the interface fails to explain what is being done with it.

Spotify, Apple Music, and YouTube Music collectively serve 700M+ users with recommendation systems that are technically sophisticated but emotionally static. Biometric integration is technically feasible with current wearable sensors. The barrier is a design problem: users will not accept recommendation systems they cannot explain or control. This project identifies the interface requirements for transparent biometric recommendations, which are a prerequisite for any product that wants to enter this space. Getting the control architecture right matters more than getting the signal processing right.

Research boundaries.

Design research only. No live sensor integration was available, so all interface concepts are based on simulated biometric state rather than real sensor data. Consumer-facing biometric features face significant regulatory and privacy considerations (GDPR, CCPA, HIPAA-adjacent concerns) that constrain product decisions independent of design quality. Critically, correlation is not causation: a biometric signal that correlates with an arousal state does not establish what the user is feeling. The interface must communicate that uncertainty honestly without undermining the feature's value proposition. This is a design communication challenge with no clean solution.

Key inputs.

Literature review of biometric-mood correlation research (HRV and mood studies, galvanic skin response and arousal state correlations). Competitive analysis of biometric integration patterns in fitness apps: Oura, Whoop, Apple Health. Analysis of user trust research in recommendation system transparency contexts.

- 01 Biometric-mood correlations are real but highly individual. Population-level correlations exist (elevated HR correlates with arousal states), but individual calibration is required for actionable recommendation accuracy. One-size-fits-all population models produce unreliable outputs for individual users.

- 02 Transparency is not optional. Users who cannot explain why a recommendation was made abandon the feature. In biometric contexts, the black-box problem is more severe than in behavioral recommendation contexts because the inputs feel more personal.

- 03 Control must be immediate and surfaced. Users need a one-tap mechanism to pause biometric influence at any moment. A buried control produces the anxiety it was meant to prevent; users who cannot easily exit the feature disengage from the feature entirely.
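Finding 01 can be illustrated with a minimal sketch. It assumes a hypothetical history of resting heart-rate samples per user and scores the current reading against that user's own baseline rather than a population mean. The function name, the sample values, and the choice of a z-score are illustrative assumptions, not part of the research.

```python
from statistics import mean, stdev

def arousal_score(current_hr: float, baseline_hrs: list[float]) -> float:
    """Return how many standard deviations current_hr sits above
    this user's own baseline (hypothetical calibration approach)."""
    if len(baseline_hrs) < 2:
        raise ValueError("need at least two baseline samples to calibrate")
    mu = mean(baseline_hrs)
    sigma = stdev(baseline_hrs)
    if sigma == 0:
        # A flat baseline gives no scale to compare against; treat as neutral.
        return 0.0
    return (current_hr - mu) / sigma

# Two hypothetical users with the same current reading of 80 bpm.
athlete_baseline = [48, 50, 52, 50, 50]   # resting HR around 50
office_baseline = [78, 80, 82, 80, 80]    # resting HR around 80

athlete_score = arousal_score(80, athlete_baseline)
office_score = arousal_score(80, office_baseline)
```

The same 80 bpm reading is far above the first user's baseline and exactly at the second user's, which is why a single population threshold would mislabel one of them.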

Three decisions that shaped the framework.

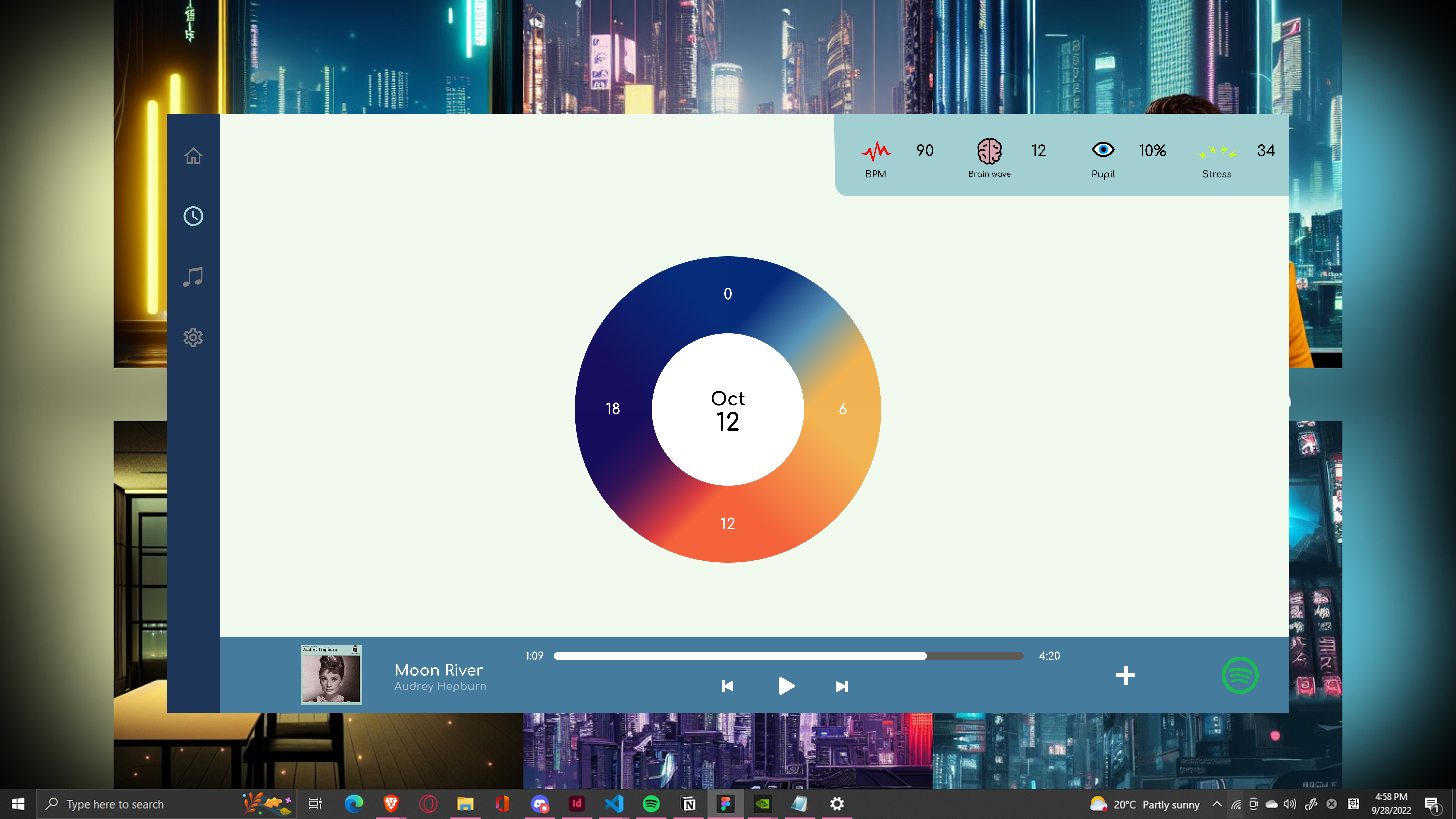

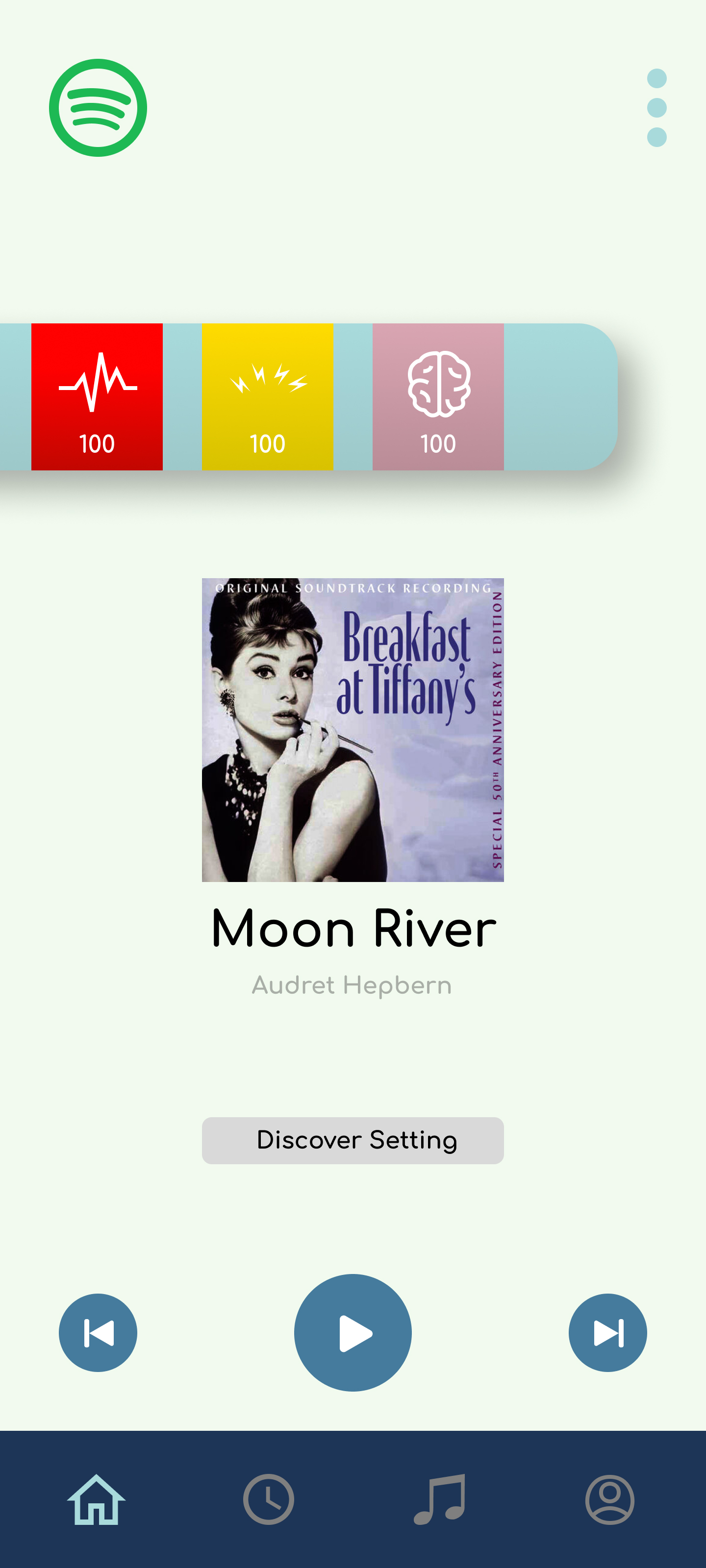

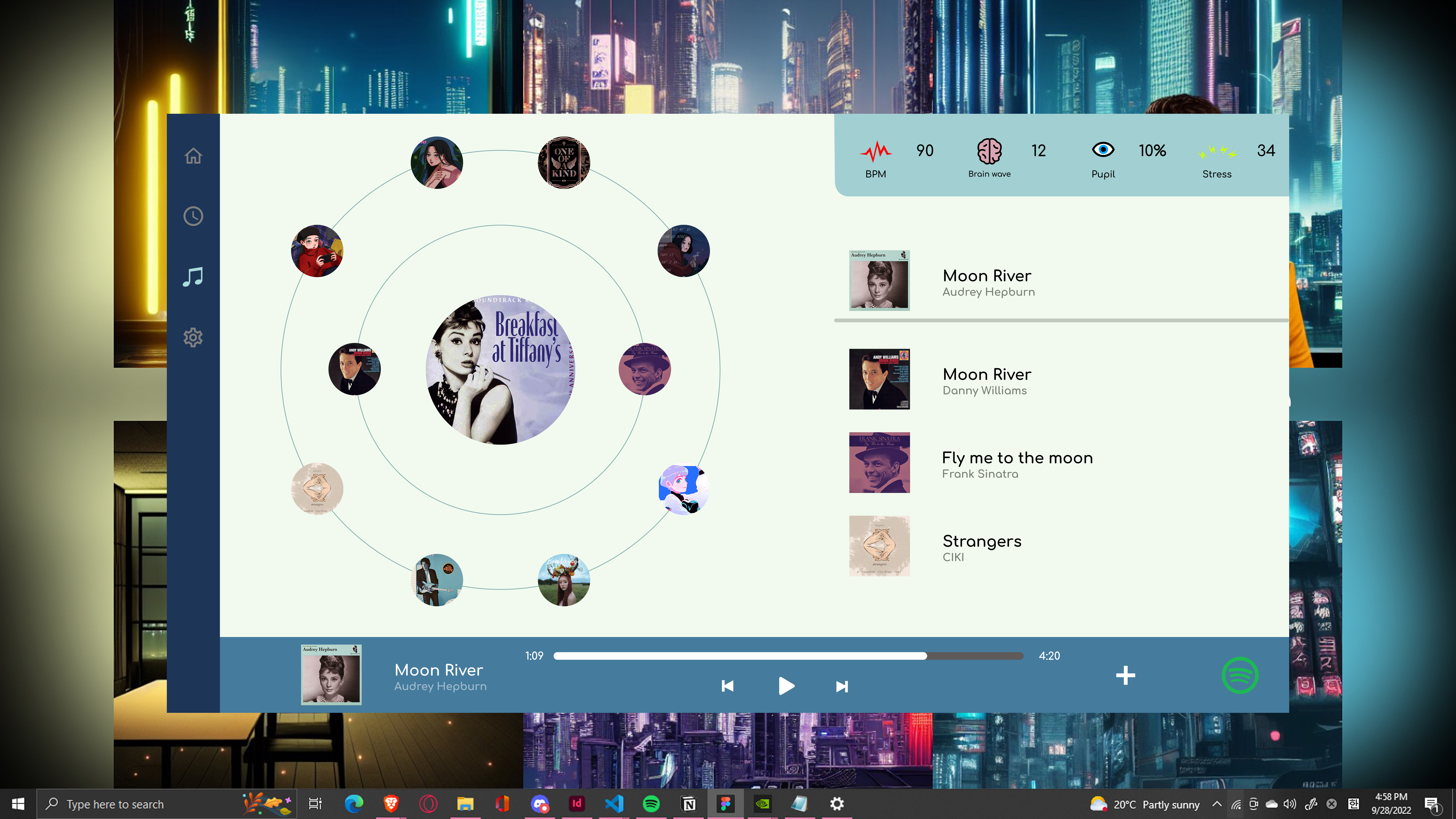

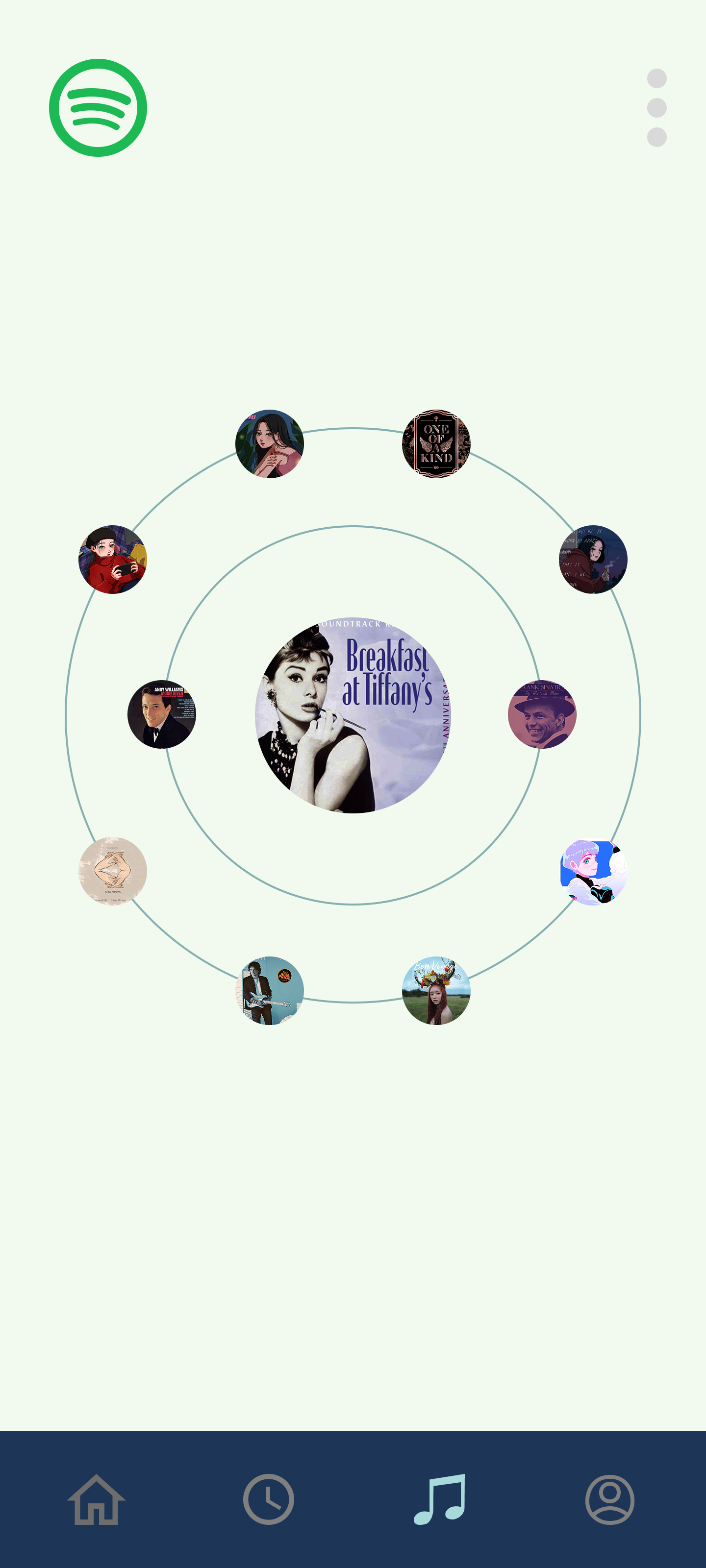

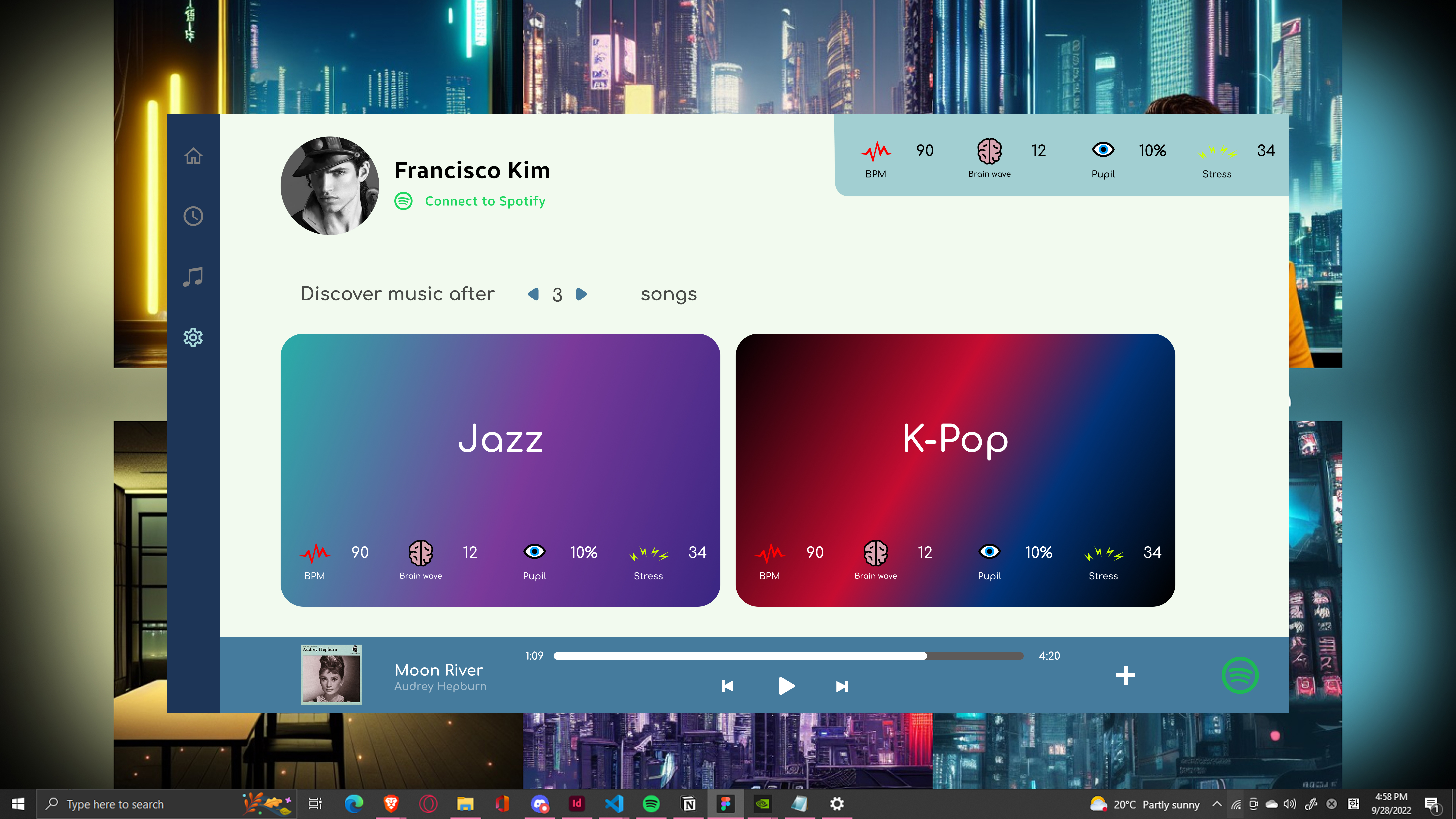

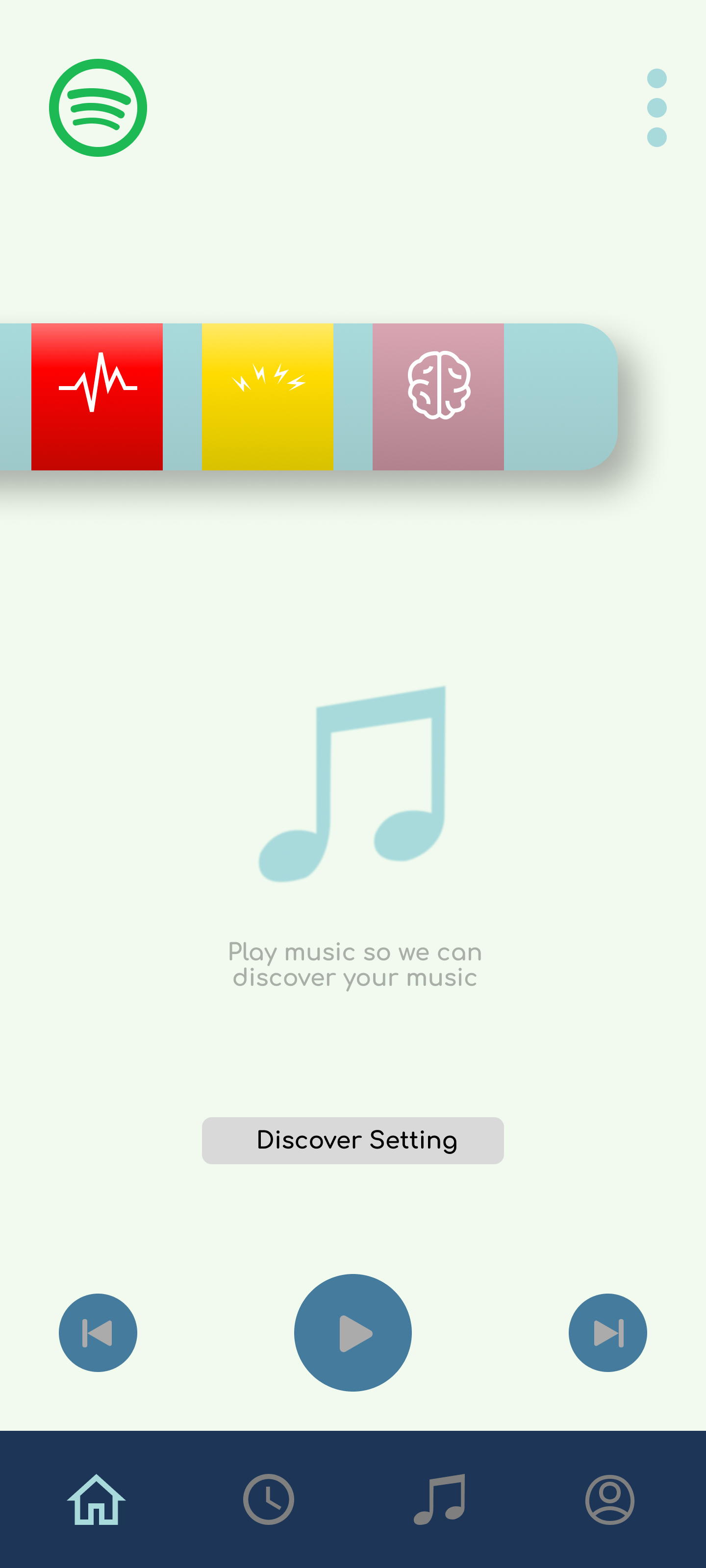

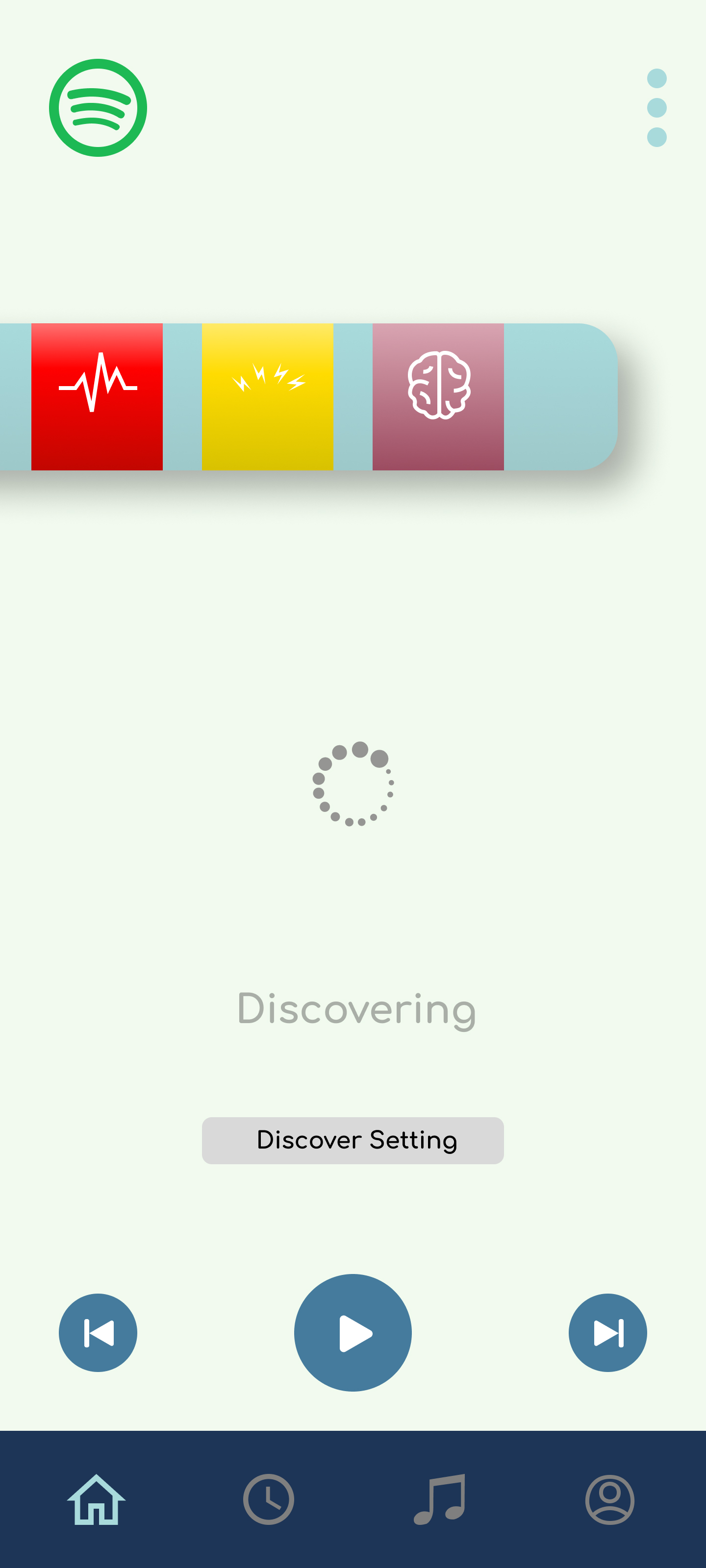

Three layers define the design framework. Signal: what is being read (biometric data). Surface: how it is communicated to the user (ambient visual treatment, not a data dashboard). Control: the immediate mechanism to pause, adjust, or disable biometric influence. The design challenge is making Surface feel ambient and non-intrusive while making Control feel accessible and safe. These requirements are in tension: the signal must be surfaced enough to build trust, but not so much that it feels like surveillance. The resolution is ambient signal display by default, explicit control always accessible, and explanation at the moment of recommendation rather than in a buried settings screen.
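The three layers can be sketched as a small data model. This is an illustration only, built on a simulated signal as in the rest of the project. The class names, the 0 to 1 arousal scale, the thresholds, and the explanation wording are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Signal:
    """Signal layer: a simulated biometric reading (no live sensor assumed)."""
    arousal: float     # hypothetical scale: 0.0 (calm) to 1.0 (highly aroused)
    confidence: float  # 0.0 to 1.0, how far the estimate can be trusted

@dataclass
class Control:
    """Control layer: a one-tap pause that is checked on every recommendation."""
    biometrics_paused: bool = False

    def toggle_pause(self) -> None:
        self.biometrics_paused = not self.biometrics_paused

@dataclass
class Recommendation:
    playlist: str
    explanation: str       # Surface layer: shown with the recommendation itself
    used_biometrics: bool

def recommend(history_pick: str, energetic_pick: str,
              signal: Optional[Signal], control: Control,
              min_confidence: float = 0.6) -> Recommendation:
    # Control is checked first, so a pause always wins over the signal.
    if control.biometrics_paused or signal is None:
        return Recommendation(
            history_pick,
            "Based on your listening history. Biometric input is not in use.",
            False)
    # Uncertain signals are ignored, and the explanation says so.
    if signal.confidence < min_confidence:
        return Recommendation(
            history_pick,
            "Based on your listening history. Your current signal is too uncertain to use.",
            False)
    if signal.arousal >= 0.7:
        return Recommendation(
            energetic_pick,
            "Your heart rate suggests you may be active, so this is more energetic than usual.",
            True)
    return Recommendation(
        history_pick,
        "Based on your listening history. Your current signal matches your usual state.",
        True)

control = Control()
workout = recommend("Ambient Focus", "Workout Mix", Signal(0.9, 0.8), control)
control.toggle_pause()  # the one-tap pause
paused = recommend("Ambient Focus", "Workout Mix", Signal(0.9, 0.8), control)
```

In this sketch every recommendation carries its own explanation, and the pause check runs before any signal is read, which mirrors the ordering the framework argues for.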

Concept interfaces.

What this proves and what it doesn't.

Supported by existing evidence:

- Transparency requirements for recommendation systems are well-documented; biometric systems face heightened requirements that are supported by academic literature and emerging regulatory frameworks (GDPR Article 22, AI Act provisions).

- Ambient visualization reducing data anxiety has precedent in health UX. Sleep tracking apps (Oura, Sleep Cycle) use ambient display patterns for similar reasons and have demonstrated user acceptance.

- Persistent, visible control placement for sensitive features is consistent with best practices from privacy UX research and emerging consent design standards.

Not yet validated:

- Whether population-level biometric-mood correlations translate to individual recommendation accuracy without personalized calibration. This is the core technical assumption that requires live sensor data to validate.

- Whether users would sustain biometric feature usage after novelty fades. Long-term adoption rate under ongoing passive data collection is unknown without live behavioral data.

The hardest thing I learned.

The ambient vs. explicit tension is harder to resolve than it appears. I spent most of the project trying to make the signal visualization "feel right" when the harder and more important problem was the control architecture. A biometric feature without a clear, immediate, persistent control does not have a transparency problem. It has a consent problem. Getting the control architecture right is more important than any aesthetic decision made elsewhere in the interface. I should have started there and worked backward to the visual treatment. The sequence matters: consent architecture first, ambient expression second.